Data Curation for Labeling

The Issue

Image sensor data often arrives as a video stream, which inherently means multiple frames per second (typically 30 to 60 FPS).

If you are trying to build a robust training set, you probably want to have a diverse set of examples that is representative of the scenario you want the model to learn.

This means that for any frame you pick from a video sequence, the neighboring 30 to 60 frames will be nearly identical. Selecting these near-identical frames makes the dataset less diverse and adds little to model performance.

Primitive methods such as downsampling or random sampling may miss valuable information and amount to a hope-based approach.
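To make the risk concrete, here is a minimal sketch of fixed-stride downsampling and random sampling on a toy clip. The frame counts and the "rare event" window are hypothetical values chosen for illustration; they do not come from any real dataset.

```python
import random


def downsample(num_frames, stride):
    """Keep every `stride`-th frame index, starting at frame 0."""
    return list(range(0, num_frames, stride))


num_frames = 300            # hypothetical clip: 10 seconds at 30 FPS
event = set(range(40, 56))  # hypothetical rare event, visible for about half a second

# Fixed-stride downsampling: one frame per second.
kept = downsample(num_frames, stride=30)
hits = [i for i in kept if i in event]
print(len(kept), hits)  # 10 frames kept, none of them inside the event window

# Random sampling of the same budget: whether it catches the event is luck.
rng = random.Random(0)
random_kept = sorted(rng.sample(range(num_frames), k=len(kept)))
random_hits = [i for i in random_kept if i in event]
print(random_kept, random_hits)
```

Neither method looks at image content, so a short but important event can fall between the kept frames.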

The Akridata Solution

Here’s how Akridata Data Explorer helps solve this issue. Let’s review it step by step.

Step 1

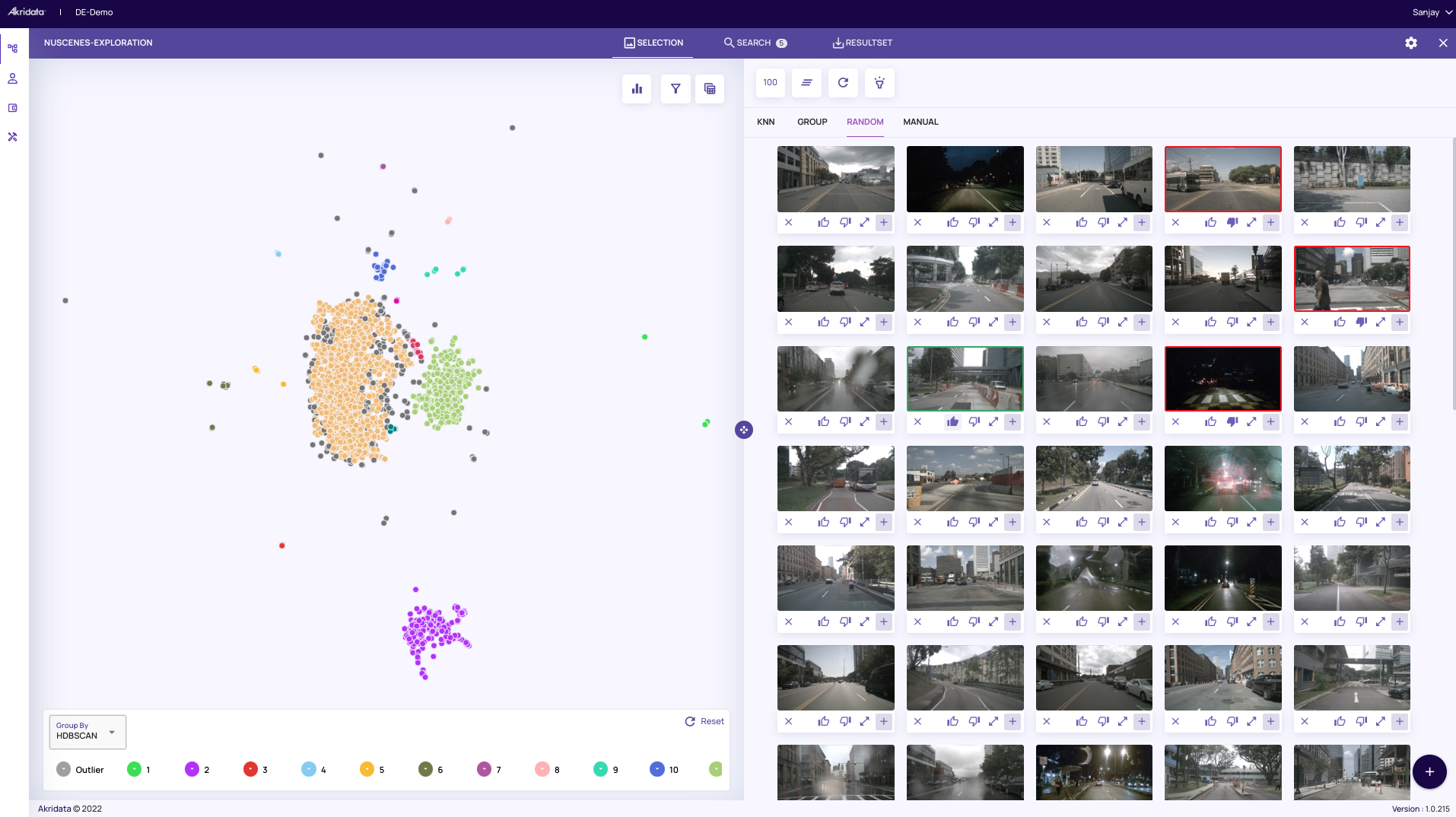

Reviewing the Current Data Set

Let’s take a look at the dataset we have. For the purpose of the illustration, we will refer to the nuScenes dataset. The images on the right are a random sample of the dataset which reflects different scenes – sunny days, traffic lights, pedestrian crossings, night shots, and rainy days.

Step 2

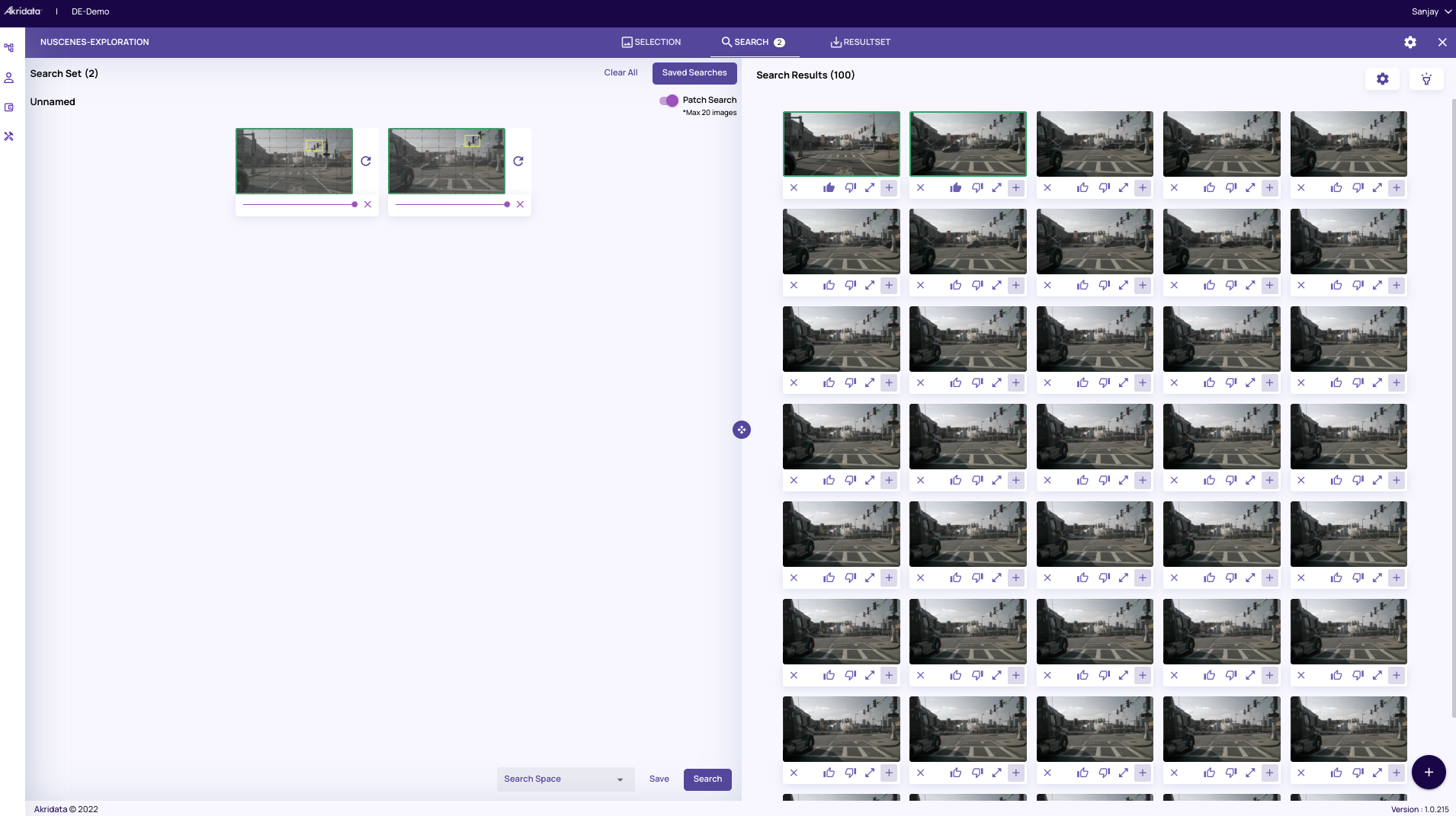

Using Our Patch Search Feature

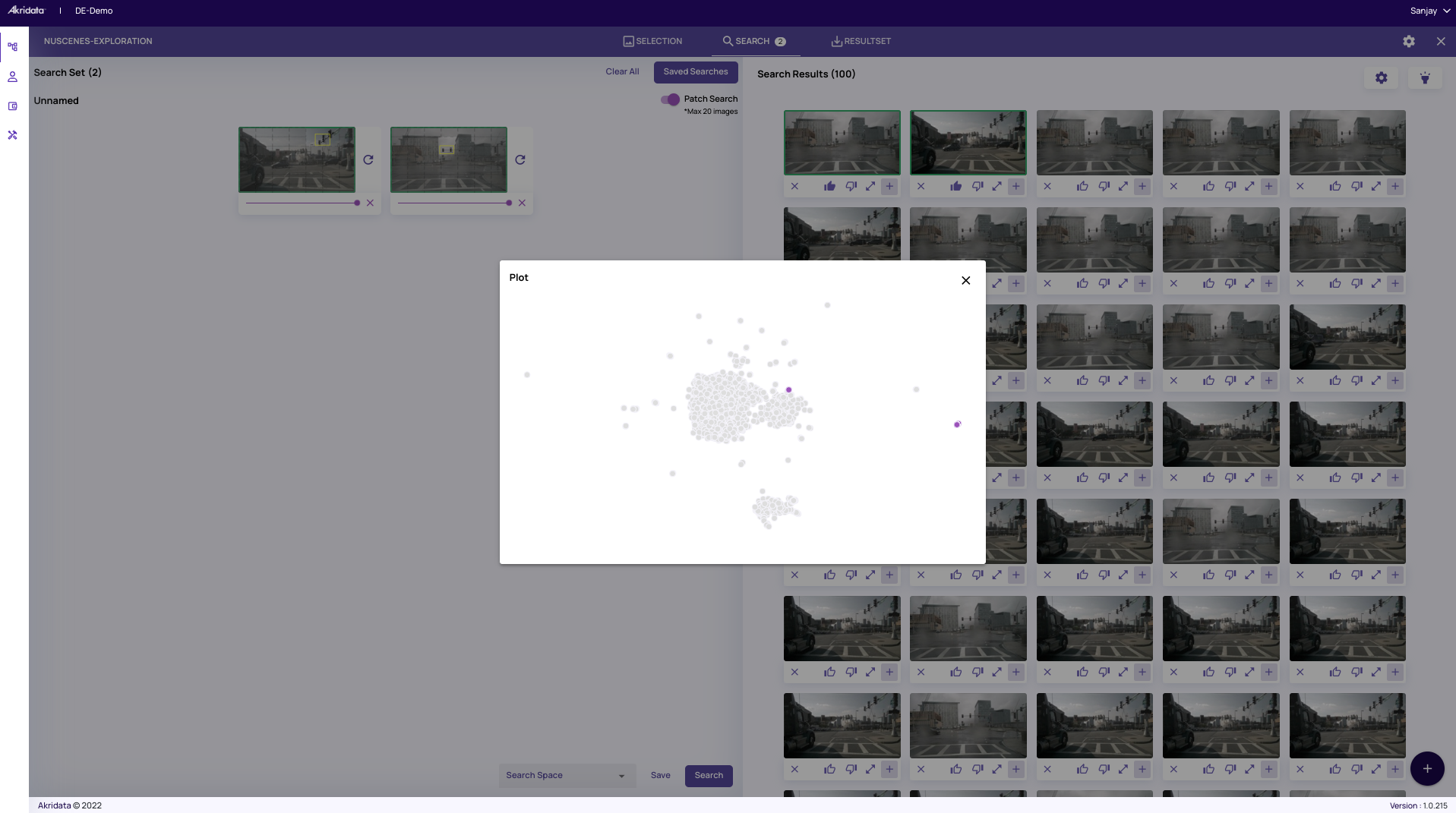

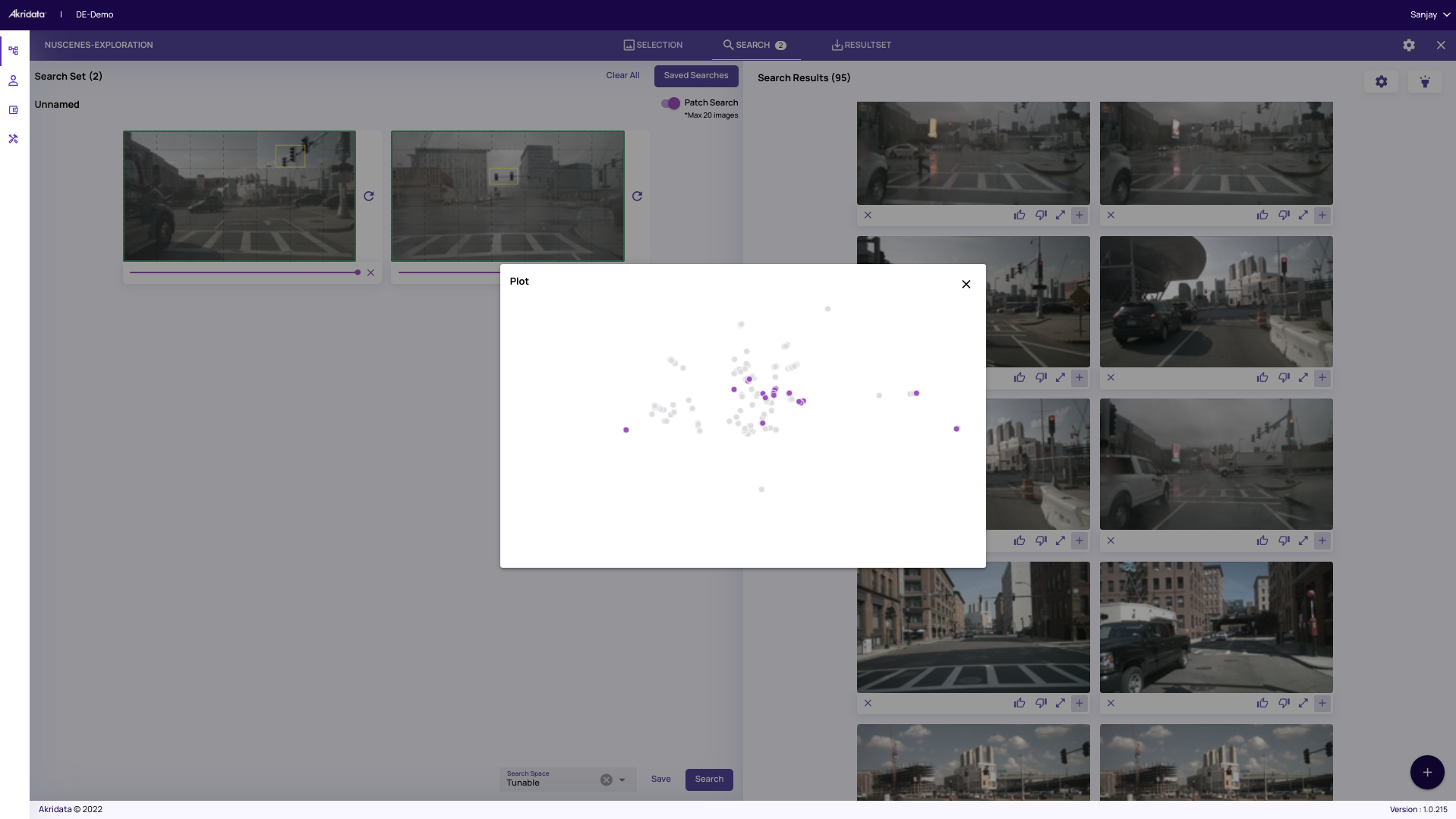

Suppose you want to use our Patch Search feature to find images that contain a traffic light. You will see that the search results have faithfully captured the neighboring frames in the video sequence that contain the traffic light. As expected, these images are the nearest neighbors in the highlighted cluster map, as shown below (using our flashlight feature).

However, you want to give the labelers a dataset that has a diverse representation of the traffic light, not just multiple frames captured at one street corner.
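The following sketch illustrates why a similarity search tends to return adjacent frames. It assumes each image (or patch) has already been mapped to a feature vector; the two-dimensional vectors and the `nearest_neighbors` helper are hypothetical and are not Data Explorer's API.

```python
import math


def nearest_neighbors(query, features, k):
    """Return indices of the k feature vectors closest to the query (Euclidean)."""
    order = sorted(range(len(features)), key=lambda i: math.dist(query, features[i]))
    return order[:k]


# Hypothetical embeddings: frames 0-4 are consecutive frames from one video,
# frames 5-7 show traffic lights at other locations.
features = [
    (1.00, 1.00), (1.01, 1.00), (1.02, 1.01), (1.03, 1.01), (1.04, 1.02),
    (3.0, 0.5), (0.2, 4.0), (5.0, 5.0),
]
query = (1.02, 1.00)  # patch embedding taken from the same video sequence

top5 = nearest_neighbors(query, features, k=5)
print(sorted(top5))  # [0, 1, 2, 3, 4]
```

All five results come from the same street corner, because consecutive frames sit almost on top of each other in feature space.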

Step 3

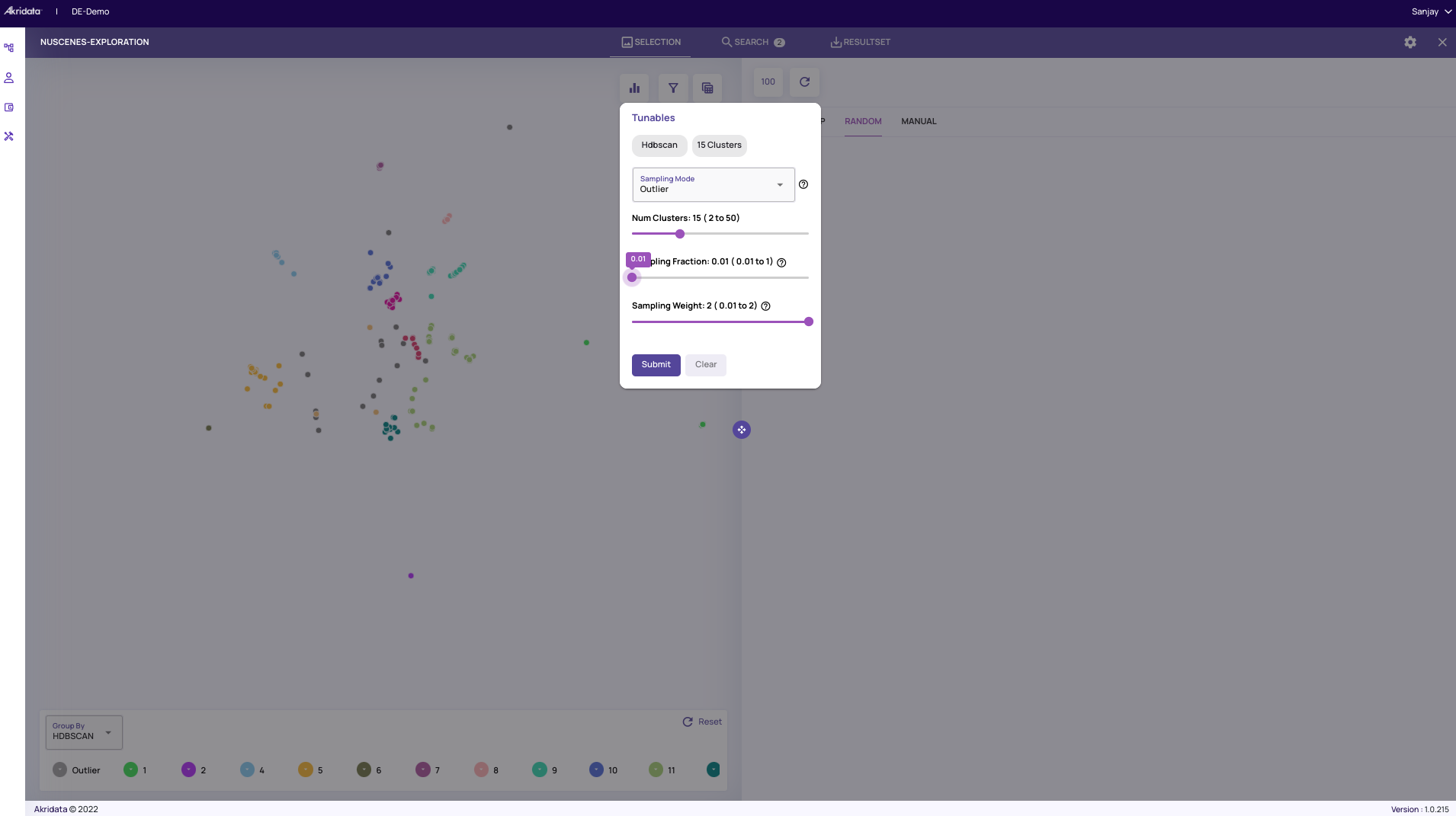

Applying Coreset Sampling

To capture the diversity of scenes that contain a traffic light, we can apply Coreset sampling to reduce the dataset in the feature space. As shown below, we select Coreset as the sampling method and set the sampling fraction to 0.01 (1%) in the Tunables panel.
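For intuition, one common way to build a coreset is greedy k-center selection: repeatedly pick the point farthest from everything selected so far. The sketch below is only an illustration under that assumption; this article does not describe the algorithm Data Explorer uses internally, and the `k_center_greedy` helper and the toy features are hypothetical.

```python
import math
import random


def k_center_greedy(features, fraction, seed=0):
    """Return indices of a subset chosen by greedy k-center selection."""
    n = len(features)
    k = max(1, round(n * fraction))
    first = random.Random(seed).randrange(n)
    selected = [first]
    # Distance from every point to its nearest selected point so far.
    min_dist = [math.dist(f, features[first]) for f in features]
    while len(selected) < k:
        # Pick the point farthest from everything already selected.
        nxt = max(range(n), key=min_dist.__getitem__)
        selected.append(nxt)
        for i, f in enumerate(features):
            d = math.dist(f, features[nxt])
            if d < min_dist[i]:
                min_dist[i] = d
    return selected


# Toy data: three "street corners", each contributing 100 near-identical frames.
rng = random.Random(42)
centers = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
frames = [
    (cx + rng.gauss(0, 0.1), cy + rng.gauss(0, 0.1))
    for cx, cy in centers
    for _ in range(100)
]

picked = k_center_greedy(frames, fraction=0.01)  # 1% of 300 frames -> 3 frames
corners = sorted(i // 100 for i in picked)
print(corners)  # [0, 1, 2]: one frame from each street corner
```

With a 1% budget, the selection spreads across the three clusters instead of returning three frames from the same sequence.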

Step 4

Finding The Traffic Lights

Now we run the same patch search, except that this time we run it on the coreset-sampled dataset by selecting “Apply Tunables”. The search results now reflect images that have traffic lights from various scenarios and angles. The search results shown are represented by the highlighted points (using our flashlight feature) in the overall cluster representation of the original dataset (100%).

Get Started with Akridata Data Explorer

Labeling is a slow and expensive process, so it is important to build a quality training set before sending it for labeling.

With Akridata Data Explorer, you can exploit the capability of coreset sampling and many other choices of tunables to remove nearly identical frames or adjoining frames and construct a diverse representation of your target image.

Data Explorer allows you to extract relevant information from 1% or any user-defined percent of the dataset while still preserving the underlying representation. With a few clicks, you save yourself a lot of time and money.

Get more bang for your buck on labeling spend. Try Data Explorer today.