Akridata Named a Vendor to Watch in the IDC MarketScape for Worldwide Data Labeling Software

Unlock AI Model Accuracy with Automated Edge Case Detection

Identify, label, and train rare AI edge cases using Akridata's Visual Data Copilot.

Help your models perform reliably in real-world scenarios by automating edge case detection, which saves time and improves model accuracy.

The Issue

Why Edge Case Detection is Crucial for AI Models

Statement

One of the biggest challenges in AI model training is identifying and labeling rare edge cases. These scenarios occur infrequently, but they have an outsized impact on how your AI models perform in real-world conditions.

Description

Imagine you’re working with a dataset of 1,000,000 images from autonomous vehicles. Only a small fraction of these images show rare events such as construction zones. Finding those instances manually is slow and tedious, but missing them can degrade your model’s performance.
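A quick back-of-the-envelope sketch shows why rare events slip through manual or random review. It assumes, purely for illustration, that 0.1% of the 1,000,000 images show a construction zone (the page gives no actual rate), then computes how many rare images a uniform random sample would be expected to contain and how likely that sample is to contain none at all.

```python
# Back-of-the-envelope sketch. RARE_RATE is a hypothetical assumption;
# the page does not say how often construction zones actually occur.
TOTAL_IMAGES = 1_000_000
RARE_RATE = 0.001  # assumed: 0.1% of images show a construction zone


def expected_hits(sample_size: int, rate: float) -> float:
    """Expected number of rare images in a uniform random sample."""
    return sample_size * rate


def miss_probability(sample_size: int, rate: float) -> float:
    """Probability that a uniform random sample contains no rare image.

    Treats draws as independent, a close approximation when the sample
    is small relative to the dataset.
    """
    return (1.0 - rate) ** sample_size


if __name__ == "__main__":
    print(f"rare images in the dataset: {round(TOTAL_IMAGES * RARE_RATE)}")
    for n in (100, 1_000, 10_000):
        print(f"review {n:>6} random images: "
              f"expect {expected_hits(n, RARE_RATE):.1f} rare, "
              f"P(see none) = {miss_probability(n, RARE_RATE):.3f}")
```

Under these assumed numbers, reviewing 1,000 random images would surface only about one construction zone on average, with better than a one-in-three chance of surfacing none.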

HOW IT WORKS

How Akridata Solves the Edge Case Challenge

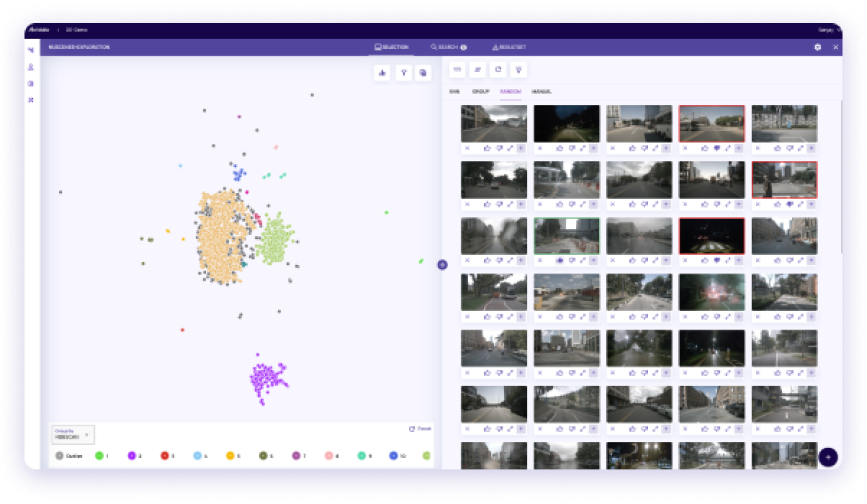

Step 1 Import and Explore Your Dataset

Select the Dataset You Want to Work With

Use Akridata’s Patch Search feature to import and explore your dataset. Whether you are working with traffic light data or construction-zone imagery, the tool lets you capture and cluster images based on the features that matter most.
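The page does not describe how Patch Search works internally. As a minimal sketch, assume each image has already been split into patches and each patch encoded as an embedding vector by some vision model (not shown). A patch-level search can then score every image by its best-matching patch under cosine similarity and return the top results. The `patch_search` function and the `patch_index` layout are hypothetical illustrations, not Akridata's API.

```python
import heapq
import math
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def patch_search(query: Vector,
                 patch_index: Dict[str, List[Vector]],
                 k: int = 5) -> List[Tuple[str, float]]:
    """Return the k images whose best patch is most similar to `query`.

    `patch_index` is a hypothetical layout: image ID -> list of patch
    embeddings produced by some (unspecified) vision encoder.
    """
    scores = (
        (image_id, max(cosine(query, patch) for patch in patches))
        for image_id, patches in patch_index.items()
        if patches  # skip images with no patches
    )
    return heapq.nlargest(k, scores, key=lambda item: item[1])


if __name__ == "__main__":
    # Toy 2-D "embeddings"; real ones would have hundreds of dimensions.
    index = {
        "img_001": [[1.0, 0.0], [0.0, 1.0]],
        "img_002": [[0.7, 0.7]],
        "img_003": [[-1.0, 0.0]],
    }
    print(patch_search([1.0, 0.0], index, k=2))
```

Scoring by the best patch rather than a whole-image embedding lets a small object, such as a single traffic cone, drive the match even when it occupies a tiny part of the frame.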

Explore Your Dataset in Detail

Get a comprehensive view of your data by clustering images into categories, then sample from clusters or adjust cluster features to gain deeper insights.
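Clustering followed by per-cluster sampling can be sketched with plain Lloyd's k-means over image embeddings. This is a generic stand-in, not Akridata's documented clustering method; the embeddings, the choice of `k`, and the helper names `kmeans` and `sample_per_cluster` are assumptions for illustration.

```python
import random
from typing import Dict, List, Sequence

# Generic stand-in for the tool's clustering; the real method is not
# documented on this page. Embeddings are assumed inputs.


def _dist2(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points: List[Sequence[float]], k: int,
           iters: int = 20, seed: int = 0) -> List[int]:
    """Plain Lloyd's k-means; returns a cluster id for every point."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    assign = [0] * len(points)
    for _ in range(iters):
        # Assignment step: attach each point to its nearest centroid.
        assign = [min(range(k), key=lambda c: _dist2(p, centroids[c]))
                  for p in points]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assign) if a == c]
            if members:  # keep the old centroid if a cluster empties out
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return assign


def sample_per_cluster(assign: List[int], per_cluster: int,
                       seed: int = 0) -> Dict[int, List[int]]:
    """Draw up to `per_cluster` point indices from every cluster for review."""
    rng = random.Random(seed)
    by_cluster: Dict[int, List[int]] = {}
    for idx, c in enumerate(assign):
        by_cluster.setdefault(c, []).append(idx)
    return {c: rng.sample(idxs, min(per_cluster, len(idxs)))
            for c, idxs in sorted(by_cluster.items())}


if __name__ == "__main__":
    pts = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0],
           [10.0, 10.0], [10.0, 11.0], [11.0, 10.0]]
    labels = kmeans(pts, k=2)
    print(labels, sample_per_cluster(labels, per_cluster=2))
```

Sampling a few images from every cluster, instead of from the dataset as a whole, keeps small clusters (often where the rare scenarios live) from being drowned out by large ones.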

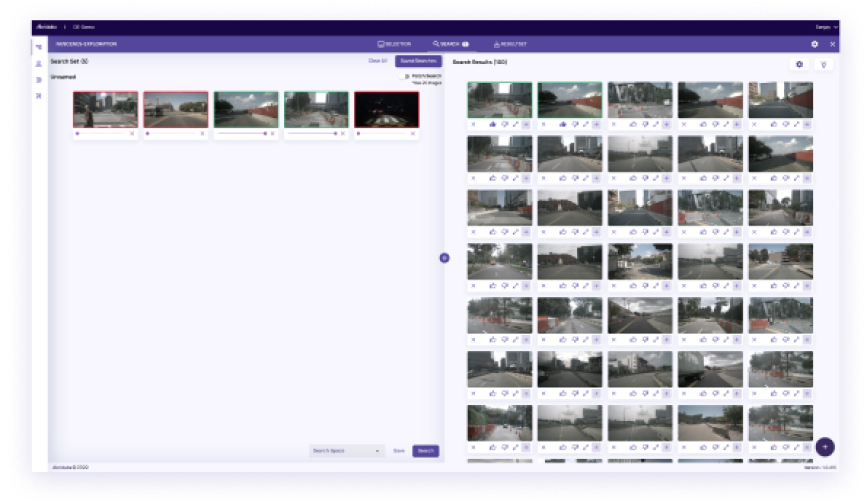

Step 2 Train Your AI with Rare Scenarios

Create Your Novelty Training Set

Once you’ve identified rare scenarios, you can use Akridata’s tool to create a training set. For instance, train your autonomous vehicle model with images that show anomalies like construction zones or obstacles on the road.
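One common way to surface candidates for a novelty training set is to score each image by how far its embedding lies from its nearest neighbours: images in sparse regions of embedding space are more likely to be rare. This sketch uses mean k-nearest-neighbour distance as that score. It is an assumed technique, not necessarily what Akridata uses, and its brute-force O(n²) search only suits small examples; a dataset of 1,000,000 images would need an approximate nearest-neighbour index.

```python
import heapq
import math
from typing import List, Sequence


def knn_novelty_scores(embeddings: List[Sequence[float]], k: int = 3) -> List[float]:
    """Mean Euclidean distance from each embedding to its k nearest neighbours.

    A higher score means the image sits in a sparser region of embedding
    space, used here as an (assumed) proxy for "rare". Brute force, O(n^2).
    """
    scores = []
    for i, a in enumerate(embeddings):
        dists = (math.dist(a, b) for j, b in enumerate(embeddings) if j != i)
        nearest = heapq.nsmallest(k, dists)
        scores.append(sum(nearest) / len(nearest) if nearest else 0.0)
    return scores


def novelty_training_set(embeddings: List[Sequence[float]],
                         top_n: int, k: int = 3) -> List[int]:
    """Indices of the top_n most novel embeddings, most novel first."""
    scores = knn_novelty_scores(embeddings, k)
    order = sorted(range(len(embeddings)), key=lambda i: scores[i], reverse=True)
    return order[:top_n]


if __name__ == "__main__":
    # Four "ordinary" images clustered together and one outlier (hypothetical).
    emb = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0], [8.0, 9.0]]
    print(novelty_training_set(emb, top_n=1, k=2))
```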

Provide Positive and Negative Examples

Label images intuitively based on your needs. Add edge cases such as road obstructions as positive examples and flag irrelevant images as negative examples; giving the model both makes it more robust.

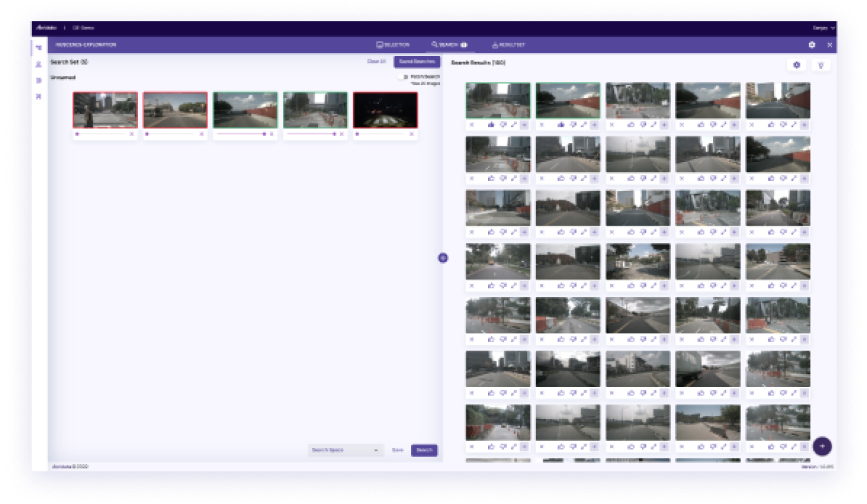

Step 3 Fine-tune Your Results

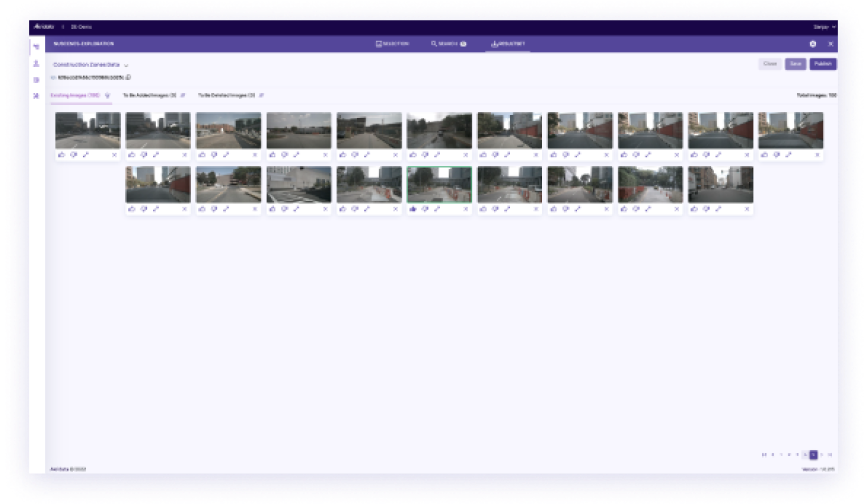

Step 4 Save and Publish Your Results

Add to or Create a Named Resultset

Create result sets that are easy to manage, label, and export. Organize these sets to share with your team or for further AI model training.
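A named resultset can be modeled as a name plus a mapping from image ID to label, serialized to JSON for sharing or export. The `ResultSet` class, its field names, and the JSON layout are hypothetical; they are not Akridata's export format.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict


@dataclass
class ResultSet:
    """Hypothetical named resultset: a name plus image ID -> label pairs."""
    name: str
    labels: Dict[str, str] = field(default_factory=dict)

    def add(self, image_id: str, label: str) -> None:
        """Add an image, or relabel it if it is already in the set."""
        self.labels[image_id] = label

    def merge(self, other: "ResultSet") -> None:
        """Add every entry of another resultset; its labels win on conflict."""
        self.labels.update(other.labels)

    def to_json(self) -> str:
        """Serialize to a (hypothetical) JSON layout for sharing or export."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "ResultSet":
        """Rebuild a resultset from the JSON produced by to_json."""
        data = json.loads(text)
        return cls(name=data["name"], labels=dict(data["labels"]))


if __name__ == "__main__":
    zones = ResultSet("construction_zones")
    zones.add("img_0042", "positive")
    zones.add("img_0107", "negative")
    print(zones.to_json())
```

Keying labels by image ID makes "add to an existing set" an idempotent update, so re-adding an image simply relabels it instead of creating a duplicate.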

Publish and Share Your Results

Publish your resultsets and share them across teams to streamline collaboration. Visual Data Copilot makes it easy to distribute labeled datasets to stakeholders and to export them to other AI frameworks.

Why Choose Akridata’s Data Copilot?

Accelerate AI Model Training

Save time by automatically detecting and labeling rare edge cases, reducing manual effort and allowing faster training cycles.

Improve Model Accuracy

Training on rare scenarios helps your AI models perform better in real-world conditions, leading to more reliable and effective results.

Easy Integration and Export

Akridata’s tool integrates seamlessly with existing AI frameworks, enabling quick data exports, labeling, and sharing across teams.

This Autonomous Vehicle Company Increased Model Accuracy by 35%

An autonomous vehicle company improved its AI model accuracy by 35% after integrating Akridata’s Visual Data Copilot. By identifying and labeling rare scenarios, such as construction zones and unusual traffic patterns, the team significantly enhanced the model’s real-world performance, producing a more accurate AI model that performed well in varied driving conditions.

Key Outcomes:

- 40% Reduction in Dataset Curation Time

- More Diverse and Representative Training Set

- Improved Model Accuracy by 30%

Features of Akridata Visual Data Copilot

Advanced Dataset Management

- Classify and cluster images from large datasets based on customizable criteria.

- Use powerful tools to group and label rare events such as road hazards or production anomalies.

Positive and Negative Feedback Loop

Label edge cases with intuitive tools that let you train your models on both positive and negative examples, so they learn what to detect as well as what to ignore.
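A positive and negative feedback loop resembles classic relevance feedback. The Rocchio update sketched here, a swapped-in technique rather than Akridata's documented algorithm, moves a query embedding toward the mean of images a reviewer marked positive and away from the mean of those marked negative, then re-ranks the candidate pool. The weights `alpha=1.0`, `beta=0.75`, `gamma=0.15` are common textbook defaults, not values from this page, and the embeddings are hypothetical.

```python
from typing import List, Sequence

# Rocchio relevance feedback as an assumed stand-in for the product's
# feedback loop; embeddings and weights are illustrative only.
Vector = Sequence[float]


def _mean(vectors: List[Vector]) -> List[float]:
    """Component-wise mean of a non-empty list of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def rocchio(query: Vector, positives: List[Vector], negatives: List[Vector],
            alpha: float = 1.0, beta: float = 0.75, gamma: float = 0.15) -> List[float]:
    """Rocchio update: alpha*query + beta*mean(pos) - gamma*mean(neg)."""
    updated = [alpha * q for q in query]
    if positives:
        updated = [u + beta * p for u, p in zip(updated, _mean(positives))]
    if negatives:
        updated = [u - gamma * n for u, n in zip(updated, _mean(negatives))]
    return updated


def rank(query: Vector, candidates: List[Vector]) -> List[int]:
    """Candidate indices sorted by dot-product similarity to the query, best first."""
    scores = [sum(q * c for q, c in zip(query, cand)) for cand in candidates]
    return sorted(range(len(candidates)), key=lambda i: scores[i], reverse=True)


if __name__ == "__main__":
    pool = [[1.0, -1.0], [0.0, 1.0], [0.5, 0.5]]  # hypothetical image embeddings
    query = [1.0, 0.0]
    print("round 0:", rank(query, pool))
    # A reviewer marks pool[1] as a positive and pool[0] as a negative.
    query = rocchio(query, positives=[pool[1]], negatives=[pool[0]])
    print("round 1:", rank(query, pool))
```

In a loop, each round's top-ranked images go to a reviewer, their positive and negative labels feed the next `rocchio` call, and the refined query re-ranks the pool.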

Seamless Integration

Export labeled datasets easily into your existing AI framework. Collaborate across teams with shared resultsets for faster AI training and deployment.

FAQs

AI edge case detection involves identifying rare, uncommon scenarios in datasets that can significantly affect model performance. Detecting these edge cases is crucial for improving AI model accuracy in real-world conditions.

Akridata’s Data Explorer uses advanced clustering and filtering techniques to automatically identify and label rare scenarios in AI datasets, saving time and enhancing model training for better accuracy.

Yes, by training AI models on rare scenarios like edge cases, Akridata’s Data Explorer helps improve the overall accuracy and reliability of models in real-world applications.

Akridata’s automated dataset labeling tool reduces manual effort by detecting and labeling edge cases, accelerating the AI training process and shortening time-to-deployment.

Akridata’s Data Explorer seamlessly integrates with popular AI frameworks, allowing for quick dataset exports, easy sharing of labeled datasets, and smooth collaboration across teams.

Ready to Boost AI Model Accuracy?

Start identifying rare edge cases and enhance your AI model’s performance today.