Akridata Named a Vendor to Watch in the IDC MarketScape for Worldwide Data Labeling Software

Revolutionize Manufacturing Data Management with Akridata’s Smart Tiering

Edge-To-Cloud Smart Tiering For Manufacturing

The Issue

Challenges in Manufacturing Data Management

Computer vision applications and digital twins generate massive volumes of data, requiring efficient edge-to-cloud tiering solutions for scalable storage and processing.

What exactly is the issue?

- Modern manufacturing systems use multiple camera sensors for both coarse-grained and fine-grained monitoring.

- Uses of this data include predictive maintenance, safety assurance, and warranty claims; together they produce terabytes to petabytes of image data.

- Challenges:

  - Scalability: Managing massive datasets across edge sites, regional centers, and cloud storage.

  - Cost-Efficiency: Reducing archival costs while ensuring quick access to required data.

  - Latency: Maintaining reasonable latency during data access from multiple storage tiers.

The Akridata Solution

Smart Ingest + Smart Tiering for Manufacturing

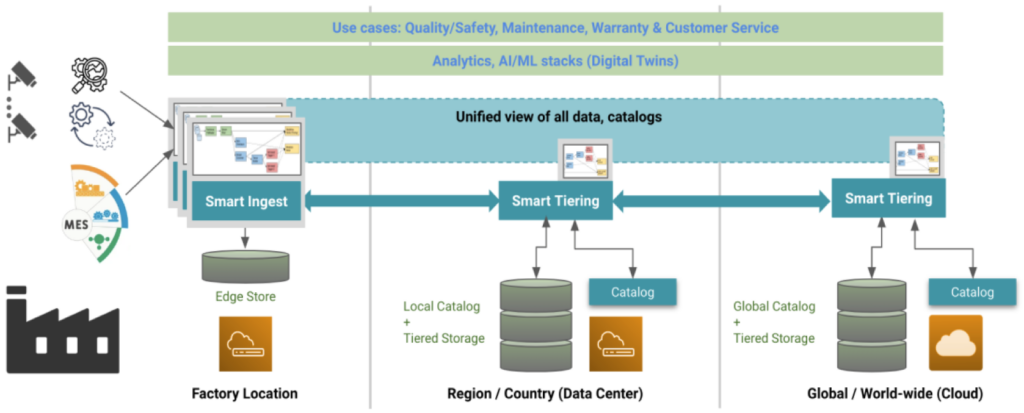

Unified View of Data Processing and Storage – From Edge to Global Cloud with Smart Ingest and Tiering Components.

Smart Ingest Features

- Consolidates sensor data with Manufacturing Execution System (MES) components.

- Supports agile data pipelines for transforming and transferring data.

- Key Benefits:

  - Unlimited object handling for massive scalability.

  - Flexible migration policies (cloud, on-prem, or hybrid storage).

  - Optimized data transfer via intelligent sub-folder and metadata prioritization.
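As a rough illustration of sub-folder and metadata prioritization, the sketch below orders a transfer queue so that objects in higher-priority folders, or objects carrying a priority flag in their metadata, are sent first. The function name `transfer_order`, the `flagged` metadata key, and the numeric priorities are all hypothetical; this is not Akridata's API, only one way such ordering could work.

```python
def transfer_order(items, folder_priority, default_priority=100, flag_boost=50):
    """Return object paths in the order they would be transferred.

    items: list of (path, metadata) pairs, where metadata is a dict.
    folder_priority: maps a sub-folder to a number; lower numbers go first.
    All names and numbers here are illustrative assumptions.
    """
    def rank(item):
        path, meta = item
        # Everything before the last "/" is treated as the sub-folder.
        folder = path.rpartition("/")[0]
        base = folder_priority.get(folder, default_priority)
        # Hypothetical metadata key: flagged objects jump ahead in the queue.
        return base - (flag_boost if meta.get("flagged") else 0)

    # sorted() is stable, so objects with equal rank keep their arrival order.
    return [path for path, _ in sorted(items, key=rank)]


items = [
    ("line1/cam_a/001.png", {}),
    ("line2/cam_b/002.png", {}),
    ("line1/cam_a/003.png", {"flagged": True}),
    ("misc/004.png", {}),
]
priorities = {"line2/cam_b": 10, "line1/cam_a": 20}
order = transfer_order(items, priorities)
```

Here the flagged image is transferred first, followed by the `line2/cam_b` folder, then the rest of `line1/cam_a`, and finally the folder with no assigned priority.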

Smart Tiering Features

- Implements tiered storage policies for edge, regional, and cloud-based processing.

- Examples of Tiering Policies:

  - Standard Policies: Archive data with low access frequency (e.g., <10 accesses in 6 months).

  - Catalog-Driven Policies: Uniformly manage data captured by specific sensors or locations.

  - Use-Case-Specific Policies: Store low-res replicas in Tier 1 storage and full-res data in archival tiers.
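The three example policies above can be pictured as small rules that each either pick a tier or abstain. The following is a minimal sketch under stated assumptions: the tier names, the `ObjectRecord` fields, the 182-day window standing in for "6 months", and the order in which policies are consulted are all hypothetical and are not taken from the Akridata product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Hypothetical tier labels; the text only distinguishes Tier 1 from archival tiers.
TIER_1 = "tier1"
ARCHIVE = "archive"


@dataclass
class ObjectRecord:
    key: str
    sensor_id: str
    kind: str = "other"  # "low_res_replica", "full_res", or "other" (assumed labels)
    access_times: list = field(default_factory=list)


def use_case_policy(obj, now):
    """Use-case-specific: low-res replicas stay in Tier 1, full-res goes to archive."""
    if obj.kind == "low_res_replica":
        return TIER_1
    if obj.kind == "full_res":
        return ARCHIVE
    return None  # abstain


def catalog_policy(archived_sensors):
    """Catalog-driven: manage everything from the listed sensors the same way."""
    def policy(obj, now):
        return ARCHIVE if obj.sensor_id in archived_sensors else None
    return policy


def standard_policy(obj, now, min_accesses=10, window_days=182):
    """Standard: archive objects with fewer than min_accesses in the window."""
    cutoff = now - timedelta(days=window_days)
    recent = sum(1 for t in obj.access_times if t >= cutoff)
    return ARCHIVE if recent < min_accesses else None


def choose_tier(obj, now, policies):
    """Return the first decision made by any policy; default to Tier 1."""
    for policy in policies:
        decision = policy(obj, now)
        if decision is not None:
            return decision
    return TIER_1


now = datetime(2024, 7, 1)
policies = [use_case_policy, catalog_policy({"cam-legacy"}), standard_policy]
busy = [now - timedelta(days=d) for d in range(12)]  # 12 recent accesses

replica = ObjectRecord("a_small.png", "cam-1", kind="low_res_replica")
full = ObjectRecord("a_full.png", "cam-1", kind="full_res", access_times=busy)
hot = ObjectRecord("b.png", "cam-2", access_times=busy)
cold = ObjectRecord("c.png", "cam-2", access_times=busy[:3])
legacy = ObjectRecord("d.png", "cam-legacy", access_times=busy)
```

Consulting the policies in a fixed order is a design choice made for this sketch; a real deployment might instead attach one policy per dataset or resolve conflicts differently.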

Why It Matters

Benefits of Edge-to-Cloud Smart Tiering

Improved Performance

- Reduce latency with intelligent caching in front of archival tiers.

- Quickly access prioritized data for analytics and machine learning tasks.
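One common way to place a cache in front of a slow archival tier is a read-through cache with least-recently-used eviction. The sketch below shows that general technique only; the class name `ReadThroughCache` and the `fetch_from_archive` callable are hypothetical, and the source does not say which caching strategy Akridata uses.

```python
from collections import OrderedDict


class ReadThroughCache:
    """Small LRU cache that falls back to a slower archival fetch on a miss."""

    def __init__(self, fetch_from_archive, capacity=2):
        self._fetch = fetch_from_archive  # assumed: callable taking an object key
        self._capacity = capacity
        self._items = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self._items:
            # Mark the entry as most recently used.
            self._items.move_to_end(key)
            self.hits += 1
            return self._items[key]
        self.misses += 1
        value = self._fetch(key)
        self._items[key] = value
        if len(self._items) > self._capacity:
            # Evict the least recently used entry.
            self._items.popitem(last=False)
        return value


archive_calls = []

def slow_archive_fetch(key):
    # Stand-in for a high-latency read from an archival tier.
    archive_calls.append(key)
    return f"bytes-of-{key}"

cache = ReadThroughCache(slow_archive_fetch, capacity=2)
for key in ["a", "b", "a", "c", "b"]:
    cache.get(key)
```

With a capacity of two, the second read of `a` is served from the cache, while `b` is evicted when `c` arrives and has to be fetched from the archive again.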

Cost Efficiency

- Leverage flexible storage solutions to cut archival costs by storing low-priority data in cheaper storage tiers.

Scalability

- Seamlessly scale storage and access across petabytes of manufacturing data.

Key Features of Akridata Smart Tiering

Streamlining Data Storage and Access

- Transparent caching for frequently accessed data.

- Metadata-driven cataloging for quick identification and retrieval.

Deployment Benefits

Why Choose Akridata Smart Tiering?

- 10x Faster Data Access: Improved retrieval times for low-latency workflows.

- 50% Cost Reduction: Through policy-driven tiering and archival storage.

- Scalable Deployment: Flexible deployment across edge sites, regional centers, and the cloud.

Trusted by Leaders in Technology

Partner with Akridata for Scalable Manufacturing Solutions

- Accelerate AI model development with seamless data workflows.

- Simplify compliance and warranty processes with optimized storage management.

FAQs

Smart Tiering efficiently stores and transfers massive datasets using policy-based tiering, reducing costs while improving access times for manufacturing workflows.

Akridata automates data validation, transformation, and prioritization using workflows. It captures relevant subsets of data and transfers them efficiently to reduce resource consumption and accelerate analytics.

Deploy across on-premises, hybrid, or fully cloud-based infrastructures to meet manufacturing needs.

Yes, it supports petabyte-scale data with flexible tiering and metadata-driven access.

Akridata uses user-defined schemas for cataloging and indexing data, enabling rapid retrieval of subsets based on attributes like timestamps, sensor types, or usage.
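To make attribute-based retrieval concrete, here is a minimal sketch of a catalog with a user-defined schema, using an in-memory SQLite table as a stand-in. The table name, column names, and sample rows are hypothetical and chosen only to mirror the attributes mentioned above (timestamps, sensor types, usage); Akridata's actual catalog format is not described in the source.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# Hypothetical user-defined schema: one row per stored object.
conn.execute(
    """
    CREATE TABLE catalog (
        object_key   TEXT PRIMARY KEY,
        captured_at  TEXT,     -- ISO 8601 timestamps sort correctly as text
        sensor_type  TEXT,
        access_count INTEGER
    )
    """
)
# An index on the attributes used for lookups keeps subset retrieval fast.
conn.execute("CREATE INDEX idx_sensor_time ON catalog (sensor_type, captured_at)")

conn.executemany(
    "INSERT INTO catalog VALUES (?, ?, ?, ?)",
    [
        ("line1/001.png", "2024-03-01T08:00:00", "thermal", 4),
        ("line1/002.png", "2024-03-01T09:30:00", "rgb", 12),
        ("line1/003.png", "2024-03-02T10:15:00", "thermal", 1),
        ("line2/004.png", "2024-04-11T07:45:00", "thermal", 0),
    ],
)


def find_objects(conn, sensor_type, start, end):
    """Return keys of objects from one sensor type captured in [start, end)."""
    cursor = conn.execute(
        """
        SELECT object_key FROM catalog
        WHERE sensor_type = ? AND captured_at >= ? AND captured_at < ?
        ORDER BY captured_at
        """,
        (sensor_type, start, end),
    )
    return [row[0] for row in cursor]


march_thermal = find_objects(conn, "thermal", "2024-03-01", "2024-04-01")
```

The query returns only the thermal images captured in March, without touching the image data itself.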